Artificial intelligence in personnel selection: opportunities and limits

There are countless fields of application for artificial intelligence (AI) in personnel selection. The potential is enormous, and the number of AI-based HR products and services is growing rapidly. At the same time, the topic is still so new and often so complex that only a few people outside computer science really feel confident using it.

Definition of artificial intelligence:

Artificial intelligence is a branch of computer science that deals with the automation of intelligent human behaviour and with machine learning (Wichert 2021).

It is therefore hardly surprising that many HR managers are still skeptical about the possibilities of modern AI and rarely consider using it in their tried and tested personnel selection processes. This skepticism is reinforced by numerous products and services on the market that make dubious promises and do not meet the minimum quality standards in personnel selection. In a market that seems to operate primarily with buzzwords such as Robot Recruiting or technical terms such as Machine Learning, Neural Networks, and Deep Learning, it is not easy for HR managers to assess the quality of providers and thus separate the “wheat from the chaff.” After all, how can you judge the quality of an AI solution if you've never come into contact with one before?

AI in personnel selection — What is that anyway?

Do you recognize yourself in the description above? In this article, you will find the most important basic knowledge about AI in personnel selection bundled and summarized in an easy-to-understand way. We explain the most important terms you should know and clear up myths and dangerous half-knowledge about the topic. Let's focus on the most important term in the area of AI: machine learning.

Machine learning

Machine learning describes dynamic algorithms that are able to learn independently. Put simply, it's about learning from data. With increasing experience (i.e. with more training on data sets), performance in a specific task (e.g. predicting the professional success of applicants) should improve. Supervised learning, a sub-area of machine learning, plays a central role in personnel selection.

Supervised learning

Supervised learning can be understood as learning from examples. In a typical scenario, we have a measurement of a dependent variable (e.g. professional success), which we want to predict based on a series of characteristics (e.g. personality traits or abilities). In addition, we have a training data set in which we observe the dependent and independent variables for a range of objects (e.g. people). In the training data set, the algorithm should learn regularities in the data (e.g. a correlation between personality traits and professional success). For example, this could be a link between high levels of conscientiousness and professional success in the financial sector. We speak of supervised learning because the known values of the dependent variable in the data set are used to guide (supervise) the learning process. As soon as the algorithm has learned relevant regularities, their predictive performance is checked in a test data set. The fundamental question here is: Do the learned regularities provide reliable predictions even in an unknown data set? The quality of the algorithm is therefore measured by its ability to make correct predictions in an independent data set (e.g. predicting the professional success of applicants for a position as a financial advisor).
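The train-and-test idea described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the data is synthetic, the link between a conscientiousness score and a success rating is invented for the example, and a simple straight-line fit stands in for whatever algorithm a real provider might use. All names (`make_synthetic_data`, `fit_line`, `mean_absolute_error`) are hypothetical.

```python
import random

def make_synthetic_data(n, seed=0):
    """Invented data: conscientiousness score (0-10) and a noisy success rating."""
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        conscientiousness = rng.uniform(0, 10)
        # Assumed relationship for illustration only, plus random noise.
        success = 2.0 + 0.5 * conscientiousness + rng.gauss(0, 1.0)
        data.append((conscientiousness, success))
    return data

def fit_line(pairs):
    """Least-squares fit with one predictor; returns (slope, intercept)."""
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    sxx = sum((x - mean_x) ** 2 for x, _ in pairs)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def mean_absolute_error(pairs, slope, intercept):
    """Average distance between predicted and observed success ratings."""
    return sum(abs(y - (slope * x + intercept)) for x, y in pairs) / len(pairs)

data = make_synthetic_data(200)
train, test = data[:150], data[150:]      # the test set is never used for fitting

slope, intercept = fit_line(train)        # learn the regularity from examples
train_error = mean_absolute_error(train, slope, intercept)
test_error = mean_absolute_error(test, slope, intercept)

print(f"learned slope: {slope:.2f}, intercept: {intercept:.2f}")
print(f"error on training data: {train_error:.2f}")
print(f"error on unseen test data: {test_error:.2f}")
```

If the error on the unseen test data is much larger than on the training data, the learned regularity does not generalize, which is exactly the question the test data set is meant to answer.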

What can AI actually do, and what can it not do, in personnel selection?

Supporting personnel selection through AI offers numerous options. Used correctly, it has the potential to make personnel selection faster, cheaper, fairer, more objective and more valid. At the same time, it is important not to overestimate the potential of AI and to be aware of the limits and weaknesses of using algorithms. Not everything that is written about AI is based on scientific findings.

Here are the four most important myths surrounding the buzzword AI in HR

Myth 1: AI replaces HR managers

The fear that AI could soon make HR managers superfluous is probably the most widespread myth in practice, and it contributes not least to a high level of skepticism and little openness to the topic among HR managers. But the idea that algorithms could soon replace HR managers is simply unfounded. We'll tell you why!

Humans are good at understanding causalities.

Algorithms, on the other hand, are good at understanding correlations.

They're really good together.

Observing regularities in data (correlations) is not sufficient to make good personnel decisions. This requires an understanding of causality, that is, of cause-effect relationships. Although the algorithm is good at identifying regularities, human intelligence is required to critically evaluate and classify them (derive causalities).

An example: The mere observation that there are more German white men in management positions says nothing about a causal relationship (i.e. that people are in management positions because they are white, German and male).

At the same time, it is difficult for people to identify regularities in very large amounts of data. This is more true than ever in a fast-moving business world in which we are increasingly confronted with a flood of data (big data). Humans and algorithms are therefore only really good when they work together, because that's how we combine the best of both worlds.

Accordingly, AI is not about replacing the human intelligence of HR managers with the artificial intelligence of machines. Instead, the existing algorithms should help to process large amounts of data in such a way that HR managers can make the best possible decisions.

For this reason, at Aivy, we prefer the term Augmented Intelligence. The term does not imply the replacement of human intelligence, but rather emphasizes the importance of the interaction between humans and machines. Intelligence, experience, and interpersonal interaction remain important (in fact, they are essential), but they are now backed by better and more robust data. This makes it easier for HR managers to make faster, more objective and therefore more cost-effective and equitable personnel decisions.

An example: A personnel manager receives over 600 applications for an advertised position. She is faced with the challenge of inviting the best applicants to an interview. But which of the applicants have the highest potential and should therefore be invited? The decision is usually not easy, especially when applications differ only in nuances. At this point, it is worthwhile to compare the selection process in the Old World (human intelligence) and the New World (augmented intelligence).

“Old World”: Human Intelligence

- Process: Manually review resumes

- Source of information: Classic personnel selection criteria from the curriculum vitae (e.g. school grades, universities)

- Focus: Past orientation (what have applicants done in the past?)

- Time factor: Several days. Proportional increase with number of applications

“New World”: Augmented Intelligence

- Process: Self-learning algorithms

- Source of information: Large amounts of data on relationships between professional suitability and characteristics of applicants (e.g. personality traits, intelligence)

- Focus: Future orientation (what potential do applicants have in the future?)

- Time factor: A few hours. No significant increase with the number of applications

Final decision:

Human decision based on data and structured interview

The comparison shows:

The advantages of AI are particularly evident in today's increasingly fast-paced business world, where future orientation and time savings are more important than ever. The more our business world changes on a daily basis, the more important development potential becomes and the less meaningful a review of past behavior is. However, it is also important to consider what both processes still have in common: In the end, it is the person who makes the selection.

The difference lies primarily in the information base. The quality of the hiring decision therefore depends to a large extent on the quality of that information base, i.e. on the data that is used to train the algorithm. However, the quality of the data often leaves something to be desired, and the AI thus falls short of its promise of providing more objective and valid data. This brings us straight to myth number 2.

Myth 2: AI automatically leads to a more objective and fair selection of personnel.

Human decisions are often subject to distortions and unconscious biases (e.g. an unconscious preference for applicants who are similar to the decision-maker, or a decision that depends on the current mood). Algorithms can help to make more objective decisions, because the algorithm doesn't care what applicants look like or what the weather is like on the day of the job interview (also known as the “blindness” of the algorithm).

The use of algorithms guarantees a more objective selection of personnel — at least in theory and at first glance.

However, a second look at the topic reveals a more differentiated picture, because the advantage of the “blindness” of machine-generated forecasts can also be a disadvantage. Algorithms always assume that new data behaves in the same way as the data the algorithm used to learn the regularities. If the training data is purely backward-looking (e.g. data on the current composition of companies), regularities can be learned that contribute to discrimination against minorities. After all, as the term minority already says, they are in the minority. It is therefore not surprising that an algorithm recognizes a higher probability that a white man without an immigrant background will rise to a management position than a black woman with an immigrant background, because this regularity is present in the data.

The result is a kind of self-fulfilling prophecy: The algorithm confirms its predictions and leads to a repetition of previous patterns, which, however, are themselves the result of discrimination against certain marginalized groups. This and many other examples once again show the importance of the interplay between human and artificial intelligence. It is therefore important to take a close look at training data and critically examine it for possible discrimination against groups of applicants.
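One simple way to take such a close look at training data is to compare how often each group received the positive outcome in the historical records. The sketch below is only an illustration: the records, the group labels and the function names (`selection_rates`, `impact_ratio`) are invented for this example, and a real review would involve far more than a single ratio.

```python
from collections import defaultdict

# Invented historical records: (group label, was the person promoted?)
records = (
    [("majority", True)] * 40 + [("majority", False)] * 60
    + [("minority", True)] * 5 + [("minority", False)] * 20
)

def selection_rates(records):
    """Share of positive outcomes per group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        if outcome:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}

def impact_ratio(rates):
    """Lowest group rate divided by highest group rate (1.0 means equal rates)."""
    return min(rates.values()) / max(rates.values())

rates = selection_rates(records)
ratio = impact_ratio(rates)

for group, rate in rates.items():
    print(f"{group}: {rate:.0%} promoted")
print(f"impact ratio: {ratio:.2f}")
```

A ratio well below 1.0 means that an algorithm trained on these records would see one group succeed far less often and could learn exactly that pattern. As a point of comparison from outside this article, one common rule of thumb in US employment guidelines (the four-fifths rule) treats ratios below 0.8 as a signal for closer review.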

On the one hand, training data should only include demographic aspects after careful review and possible correction. On the other hand, the focus should be potential-oriented and future-oriented (which competencies will be relevant in the profession in the future?) instead of purely on the past (which competencies did people in the position have in the past?).

This is also the reason why providers on the market who promise to evaluate CVs in just a few seconds with the help of artificial intelligence should be viewed critically. The information in CVs is inherently backward-looking, difficult to verify and often says little about applicants' future potential. To assess this potential, scientifically based aptitude diagnostics are needed (e.g. in the form of engaging test procedures) that continuously optimize the prediction of professional suitability using self-learning algorithms (continuous improvement process).

Myth 3: AI automatically leads to a stronger employer brand

Many employers now advertise the AI applications in their selection process in the hope of positioning themselves as attractive employers for talented young applicants (high potentials). However, recent studies show that the majority of young target groups are also skeptical about the use of AI.

One reason for this is the often dubious offers from market providers and a lack of transparency about the use of applicant data. The selection process is increasingly becoming a “black box”, which is of course not conducive to trust. Accordingly, the results show that the employer brand cannot be strengthened with buzzwords about AI alone. In order to establish a real argument for strengthening the employer brand, the specific use of AI, the processes and the quality criteria must be explained. Transparency is key. The application process must not and should not be a black box for applicants.

Myth 4: AI leads to savings — in the short and long term

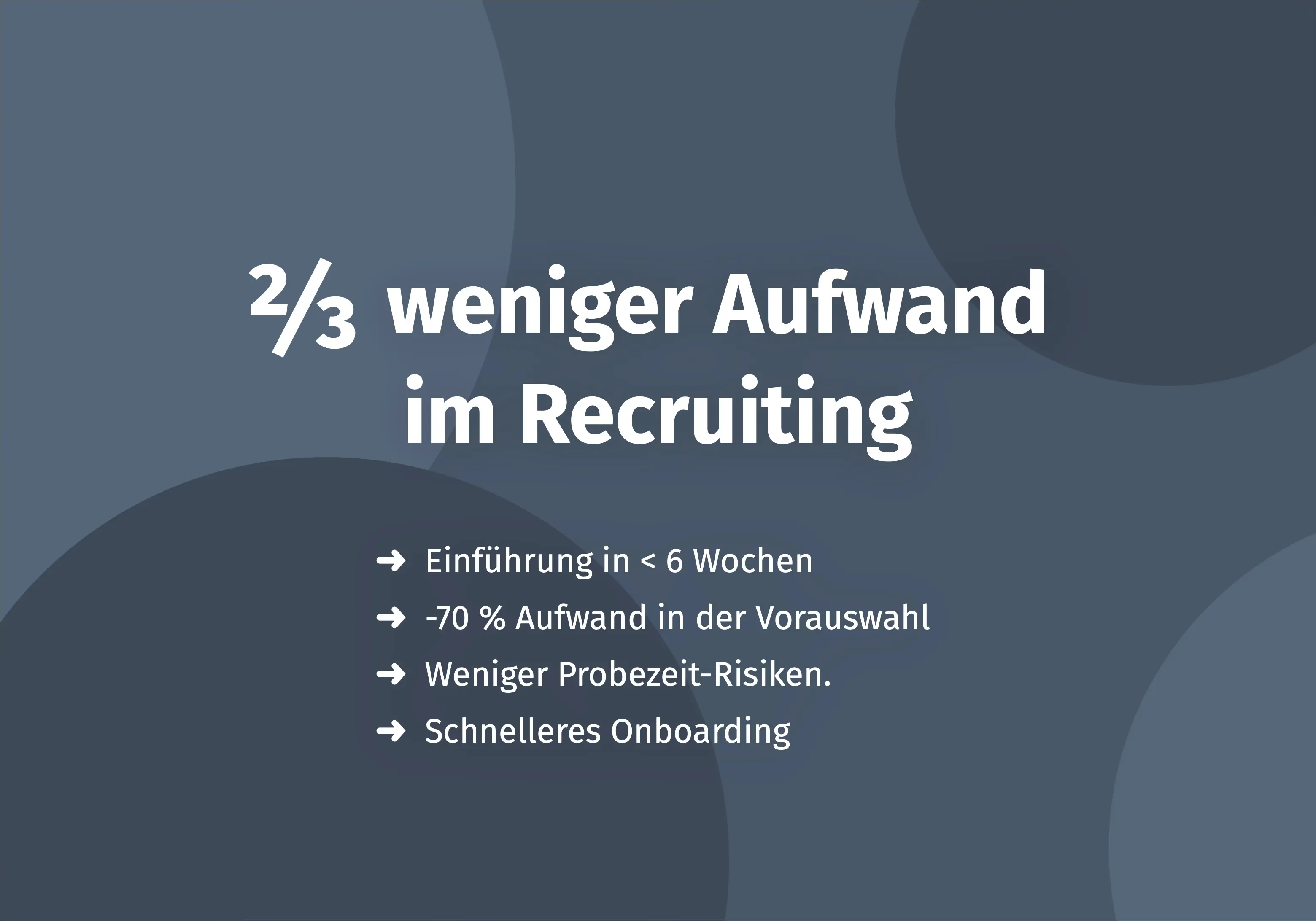

Using algorithms instead of manually scanning resumes: the potential to save personnel and time costs is obvious. AI therefore seems to automatically make personnel selection cheaper and faster. However, there is often disillusionment in HR departments following the introduction of AI. Even though the potential for time and cost savings is enormous in the long term, this is often not the case in the short term.

The development of an AI-based selection program often requires large investments in the short term: Suitable data sources must be identified, algorithms must be trained, employees must be trained, and the development of a data-based culture in the company also takes time. Only when everyone involved in the personnel selection process understands the advantages and limits of algorithms can their full potential be realized.

Accordingly, the introduction of AI is often more like a mammoth project than the hoped-for sprint. Nonetheless, doing nothing is not an option either. Ignoring the new opportunities offered by AI, or even ruling them out, will have significant effects on the competitiveness of many companies in the near future. So the earlier you start building solid data structures, the greater your long-term competitive advantage will be.

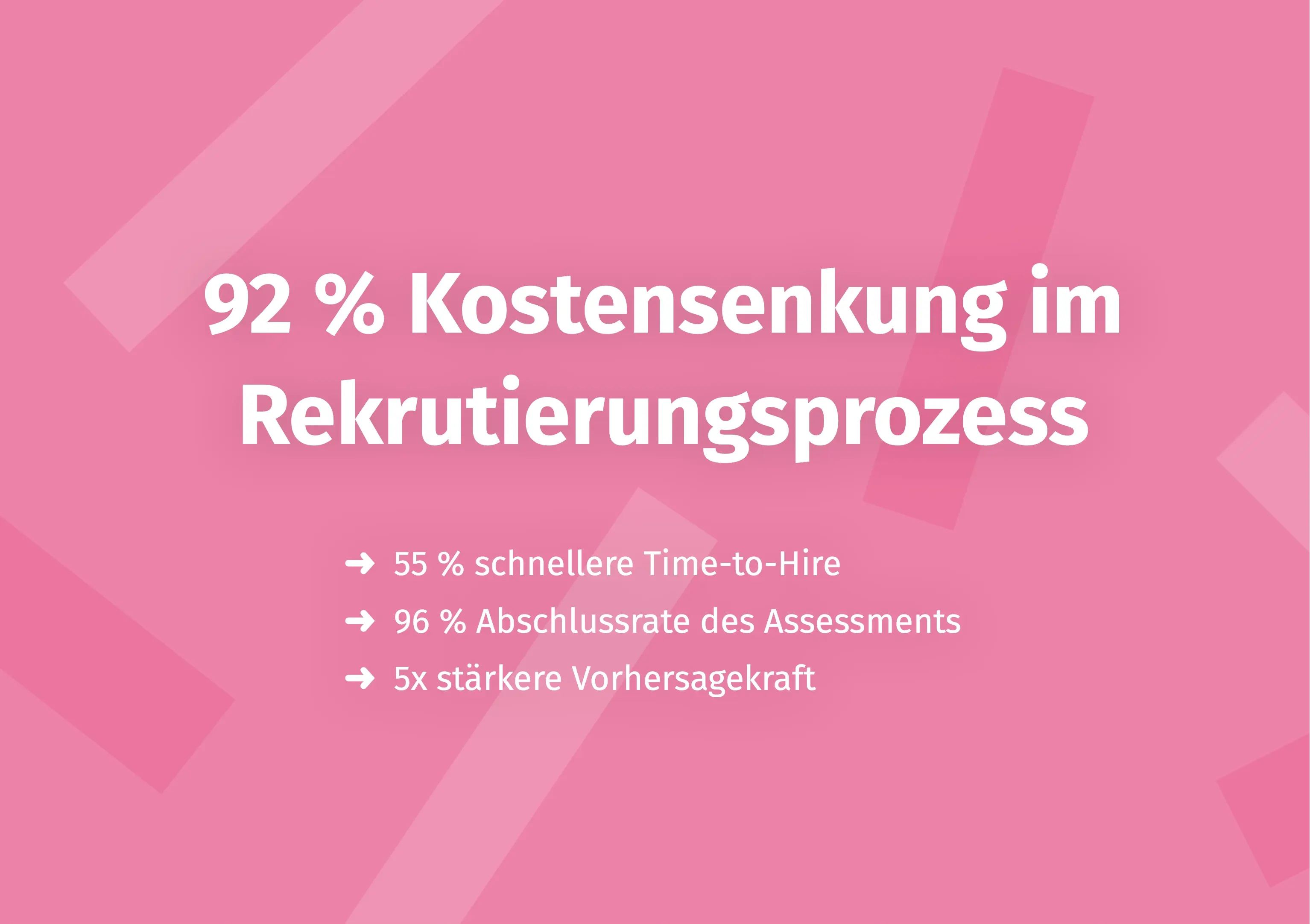

Make a better pre-selection — even before the first interview

In just a few minutes, Aivy shows you which candidates really fit the role. Beyond resumes, based on strengths.